Digital Scams Are Evolving – Is Your Response Strategy Evolving Too?

Posted on: October 31st 2025

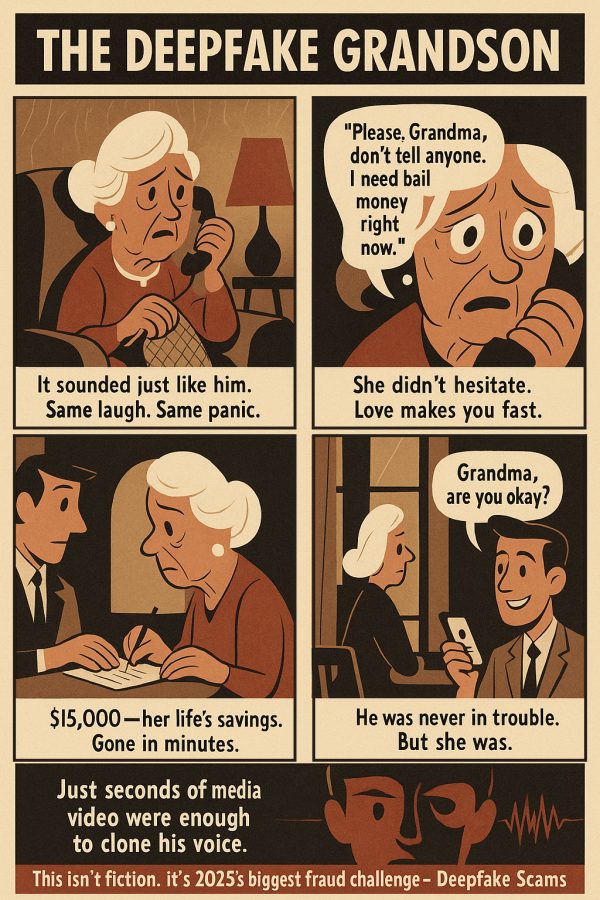

The grandmother’s voice trembled as she urged her “grandson” not to harm himself in jail. She withdrew $15,000, her entire savings, to post his bail.

It was a deepfake. Just seconds of a social media video were enough to clone his voice. He was never in trouble. But she was. This isn’t fiction. This is the biggest challenge for digital fraud prevention in 2025.

This grandmother’s story illustrates how AI-powered scams have transformed from simple phishing attempts into sophisticated deception campaigns. What happened to her could happen to anyone, and that’s what makes these scams so frightening.

Digital scams have evolved into an industrial enterprise, encompassing romance scams, investment scams, deepfake impersonations, and extensive imposter networks.

The threat extends across society, affecting retirees, students, professionals, businesses, public services, and even governments. No sector is untouched; healthcare, education, e-commerce, and beyond are all being exploited.

According to data from the Federal Trade Commission and international consumer protection agencies, global scam losses exceeded $50 billion in 2024. The real damage runs deeper than the dollar figure, however: shattered trust, lasting emotional trauma, and an increasingly vulnerable digital ecosystem.

In the United States alone, consumers reported $12.5 billion in fraud losses in 2024, a 25% jump from the previous year. Europe faced damages above €5.5 billion, with social engineering scams surging more than 300% year-on-year.


The 2025 Threat Landscape: AI-Powered and Industrialized

A threat landscape refers to the environment in which scams operate, encompassing the actors behind them, the tools they utilize, the channels they exploit, and the scale at which they operate.

In 2025, that landscape has changed completely. Scams are no longer one-off tricks but part of a global economy of fraud, industrialized through AI and organized networks.

Fraud kits sold online now include voice cloning, fake websites, and automated chat scripts. These “fraud-as-a-service” tools put advanced scams within reach of almost anyone, lowering the barrier to entry and increasing the number of attacks. Criminal groups coordinate across borders, sharing data and infrastructure to make their schemes harder to trace.

Tactics have also become more calculated. Scammers no longer send random, generic messages. Instead, they profile victims, mimic their digital behaviour, and time their approach to appear as credible as possible.

A few seconds of audio is enough to build a convincing fake voice. These deepfakes, combined with phishing emails, text messages, and social media outreach, create attacks that blend seamlessly into daily life and are almost impossible to spot.

The effect is visible worldwide. In 2024, the FTC received about 6.5 million consumer reports, with text scams alone resulting in $470 million in losses.

Europol notes a sharp rise in organized online fraud across Europe (Europol IOCTA 2024), while regulators in the Asia-Pacific region warn that scam losses are already outpacing cybersecurity budgets.

Together, these signals confirm that digital scams are not isolated events but part of a shifting global threat environment.

The Digital Scam Arsenal – Six Critical Threats Reshaping 2025

Scammers have weaponized AI, creating a new generation of threats that blur the line between authentic and artificial. Here are the six most devastating digital scams organizations must defend against in 2025:

1. AI-Powered Deepfake Impersonation: The New Identity Crisis

The Threat: Fraudsters can now clone anyone’s voice or appearance with just seconds of source material. In Hong Kong, scammers used AI-generated video calls to impersonate company executives on Zoom, convincing employees to transfer millions within minutes.

How It Works: A short clip of someone’s voice or face is enough to build a fake version. Scammers then use this clone on phone calls or video meetings to trick people into believing the interaction is real.

Enterprise Impact: These scams undermine trust in digital communication and expose vulnerabilities in executive authentication protocols. In 2024, global deepfake-related scams were estimated to have caused over $250 million in direct losses, and reports of deepfake fraud attempts rose nearly tenfold from 2022 to 2023.

2. Pig Butchering 2.0: Long-Term Investment Manipulation

The Threat: Romance scams have evolved into months-long investment frauds. Victims are groomed emotionally and financially before being drawn into fake crypto or trading platforms.

How It Works: Fraudsters spend weeks or months engaging with victims, often using AI chatbots to maintain conversations. Once trust is built, they guide victims to fake trading or crypto platforms where money is stolen.

Impact: Investment scams were the most costly type of fraud in the U.S. in 2024, resulting in $5.7 billion in losses. Globally, pig-butchering rings operating from scam compounds across Southeast Asia are estimated to defraud victims of billions each year.

3. Text, Task, and Job Scams: Fraud Through Everyday Messaging

The Threat: Scams that start as simple text messages or “easy online jobs” now rank among the fastest-growing fraud types. Scammers lure victims with small payouts or fake tasks and then pressure them to send larger sums.

How It Works: Victims receive messages offering small online jobs, delivery tasks, or “quick earnings.” Scammers make the first payments appear legitimate, but soon they demand upfront fees or larger investments that they never return.

Impact: In 2024, text-based scams cost U.S. consumers $470 million, over five times higher than in 2020. Task-based job scams added another $220 million in losses in just six months.

4. Fraud-as-a-Service Marketplaces: The Industrial Engine

The Threat: Scammers no longer need technical expertise. Online marketplaces sell ready-made toolkits for phishing, impersonation, and fake websites, while botnets provide scale.

How It Works: Criminal groups sell ready-made kits online that include fake websites, cloned voices, and automated scripts. Even inexperienced scammers can purchase these inexpensive tools and conduct large-scale fraud operations.

Impact: This democratization of deception explains why scam volumes are outpacing defenses. Global phishing incidents surged 466% in Q1 2025, coinciding with a 186% jump in breached data that feeds these toolkits.

5. Crypto Investment Deepfakes: Celebrity Impersonation at Scale

The Threat: Fraudsters create deepfake videos of celebrities and business leaders endorsing fake cryptocurrency schemes. They promote these videos through social platforms and paid ads to attract large audiences.

How It Works: AI-generated videos of celebrities or business leaders are circulated on social media, promoting fake crypto schemes. Scammers direct victims to polished websites that appear to be legitimate exchanges, where any deposits are diverted into scam wallets.

Impact: Investment scams, a category that includes fake crypto schemes, accounted for $5.7 billion in reported U.S. losses in 2024, with a growing share tied to fake celebrity endorsements. Europol and regional regulators warn that crypto scams are now among the most damaging types of fraud worldwide (Europol IOCTA, 2024).

6. Digital Arrest Scams: Psychological Warfare Through Technology

The Threat: First seen in Asia, these scams utilize fake law enforcement calls or videos to convince victims that they face imminent arrest unless they pay.

How It Works: Scammers pose as police or court officials, using fake documents and deepfake voices to pressure victims. They threaten arrest unless payment is made immediately, exploiting fear to force compliance.

Impact: The scams have resulted in hundreds of millions of reported losses in Asia, particularly among elderly citizens and immigrants unfamiliar with local laws and regulations. Authorities warn of migration to Western markets, raising concern that losses will escalate globally.

The Common Thread: AI as the Great Equalizer

One factor stands out across scams: AI. What once required expertise can now be purchased as a toolkit, allowing small actors to run enterprise-level fraud. Traditional defenses, built for human-scale threats, are struggling to keep pace with this new level of deception.

This is why enterprises need defenses that evolve as fast as the scams themselves, and it is where Straive is making a measurable impact.

Real-World Results – How Straive Is Making an Impact

Detecting digital scams today means going beyond obvious red flags. Scammers often hide their schemes in subtle behavioral patterns, transaction anomalies, and synthetic content spread across voice, video, social media, and payment networks. Traditional systems struggle because the signals are fragmented, evolve in real time, and often mimic legitimate activity.

Straive’s AI-led solutions spot what others miss. By combining machine learning, behavioral analytics, and real-time monitoring, Straive enables institutions to detect scams before they succeed, whether deepfake impersonation, pig-butchering rings, or fake job networks.

Case 1: Global Merchant Scam Detection

Challenge: A leading financial services firm faced billions of dollars in attempted fraud across various regions, including the Asia-Pacific, North America, Europe, and Latin America. Scammers exploited payment gaps using fake merchants, job ads, and crypto schemes.

Solution: Straive built an AI/ML-powered framework to compare merchant behavior at scale, identifying high-risk merchants through signals such as low repeat customers, high decline rates, abnormal chargeback percentages, and elevated LLM-based risk scores.
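To make the idea of combining merchant signals concrete, here is a minimal Python sketch. It is not Straive's framework: the `MerchantStats` fields, the threshold values, and the "two or more flags" rule are all assumptions chosen for illustration, and the LLM-based risk score is treated as a number supplied by some separate model.

```python
from dataclasses import dataclass


@dataclass
class MerchantStats:
    """Hypothetical per-merchant aggregates; all rates are fractions in [0, 1]."""
    merchant_id: str
    repeat_customer_rate: float  # share of customers with more than one purchase
    decline_rate: float          # share of authorization attempts that were declined
    chargeback_rate: float       # share of settled transactions later charged back
    llm_risk_score: float        # score assumed to come from a separate text model


# Illustrative thresholds only (assumptions, not values from the case study).
MIN_REPEAT_CUSTOMER_RATE = 0.05
MAX_DECLINE_RATE = 0.30
MAX_CHARGEBACK_RATE = 0.01
MAX_LLM_RISK_SCORE = 0.80


def risk_flags(m: MerchantStats) -> list[str]:
    """Return the names of the risk signals this merchant trips."""
    flags = []
    if m.repeat_customer_rate < MIN_REPEAT_CUSTOMER_RATE:
        flags.append("low_repeat_customers")
    if m.decline_rate > MAX_DECLINE_RATE:
        flags.append("high_decline_rate")
    if m.chargeback_rate > MAX_CHARGEBACK_RATE:
        flags.append("abnormal_chargebacks")
    if m.llm_risk_score > MAX_LLM_RISK_SCORE:
        flags.append("elevated_llm_risk")
    return flags


def is_high_risk(m: MerchantStats, min_flags: int = 2) -> bool:
    """Treat a merchant as high-risk when at least `min_flags` signals fire."""
    return len(risk_flags(m)) >= min_flags
```

Requiring more than one signal before flagging a merchant is a simple way to reduce false positives, since any single metric can look unusual for a legitimate business. A production system would more likely learn weights from labeled data than rely on fixed cut-offs.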

Results:

- Systematic detection of scam-linked merchants across multiple geographies

- Significant reduction in fraudulent transactions and disputes

- Increased ability to block coordinated scam networks before execution

Case 2: Real-Time Fraud Blocking for a Leading Financial Services Firm

Challenge: A leading financial services firm struggled with escalating fraudulent merchant activity that traditional monitoring methods could not detect quickly enough.

Solution: Straive designed and ran a 24/7 fraud operations center powered by AI and dynamic rules-based blocking. The system continuously analyzed merchant behavior, transaction flows, and ecosystem risk factors to detect fraud attempts in real time.
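As a rough illustration of what "dynamic rules-based blocking" can look like in code, the sketch below keeps a set of named rules that can be added or removed while the system is running, plus a sliding-window velocity check per merchant. Every name here (`Transaction`, `RuleEngine`, `VelocityTracker`) and every limit is hypothetical; the article does not describe the actual system's design.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Transaction:
    merchant_id: str
    amount: float
    timestamp: float  # seconds since some reference point


class RuleEngine:
    """Holds named predicates; rules can be swapped in and out at runtime."""

    def __init__(self) -> None:
        self.rules: dict[str, Callable[[Transaction], bool]] = {}

    def add_rule(self, name: str, predicate: Callable[[Transaction], bool]) -> None:
        self.rules[name] = predicate

    def remove_rule(self, name: str) -> None:
        self.rules.pop(name, None)

    def evaluate(self, tx: Transaction) -> list[str]:
        """Return the names of all rules the transaction triggers."""
        return [name for name, pred in self.rules.items() if pred(tx)]

    def should_block(self, tx: Transaction) -> bool:
        return bool(self.evaluate(tx))


class VelocityTracker:
    """Flags a merchant that exceeds `max_count` transactions per time window."""

    def __init__(self, window_seconds: float, max_count: int) -> None:
        self.window_seconds = window_seconds
        self.max_count = max_count
        self._seen: dict[str, deque] = defaultdict(deque)

    def exceeds(self, tx: Transaction) -> bool:
        times = self._seen[tx.merchant_id]
        times.append(tx.timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while times and times[0] <= tx.timestamp - self.window_seconds:
            times.popleft()
        return len(times) > self.max_count
```

A typical use would register one rule for unusually large amounts (`engine.add_rule("large_amount", lambda tx: tx.amount > 10_000)`) and another bound to a tracker (`engine.add_rule("velocity", tracker.exceeds)`), then call `engine.should_block(tx)` on each incoming transaction. Keeping rules as named entries is what makes them "dynamic": an analyst can tighten or retire a rule without redeploying the rest of the pipeline.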

Results:

- Over $1 billion in fraud savings delivered to the network

- Real-time blocking of fraudulent merchant activity

- Reduced disputes and stronger protection for end customers


Straive: Operationalizing AI to Keep Financial Institutions One Step Ahead of Digital Scams

At a time when digital scams are scaling into a trillion-dollar industry, organizations need more than detection; they need a partner who can outpace fraud with intelligence, scale, and precision. That’s where Straive stands apart.

- AI Operationalization at Scale: From pilots to enterprise-grade systems, Straive turns AI into real-time fraud prevention across payments, voice, video, text, and merchant networks.

- Global Reach, Regional Depth: Operations in North America, Europe, Asia-Pacific, and emerging markets, with teams tuned to local scam patterns.

- Proven Scale: 180,000+ professionals worldwide, including 700+ specialists in content accessibility, data, and AI.

- Cross-Sector Strength: Safeguarding financial services, e-commerce, social platforms, healthcare, and education.

Straive enables organizations to stay proactive, not reactive, outsmarting AI-driven fraud and securing trust at scale.

About the Author

Straive helps clients operationalize the data > insights > knowledge > AI value chain. Straive’s clients extend across Financial & Information Services, Insurance, Healthcare & Life Sciences, Scientific Research, EdTech, and Logistics.
