How to Explainably Identify Adverse Events in Clinical Trial Data

Posted on: April 23rd 2026

A patient reports mild joint pain a few days after treatment, briefly noted in a longer clinical note. Easy to overlook at first glance, but would you flag it as a potential safety signal?

In many clinical trials, this is exactly how adverse events appear: not as clearly labeled entries, but as scattered signals across patient narratives, site notes, and observational records. Interpreting these signals consistently becomes difficult as data volumes grow and timelines tighten, making it harder for teams to identify, validate, and classify safety events with confidence.

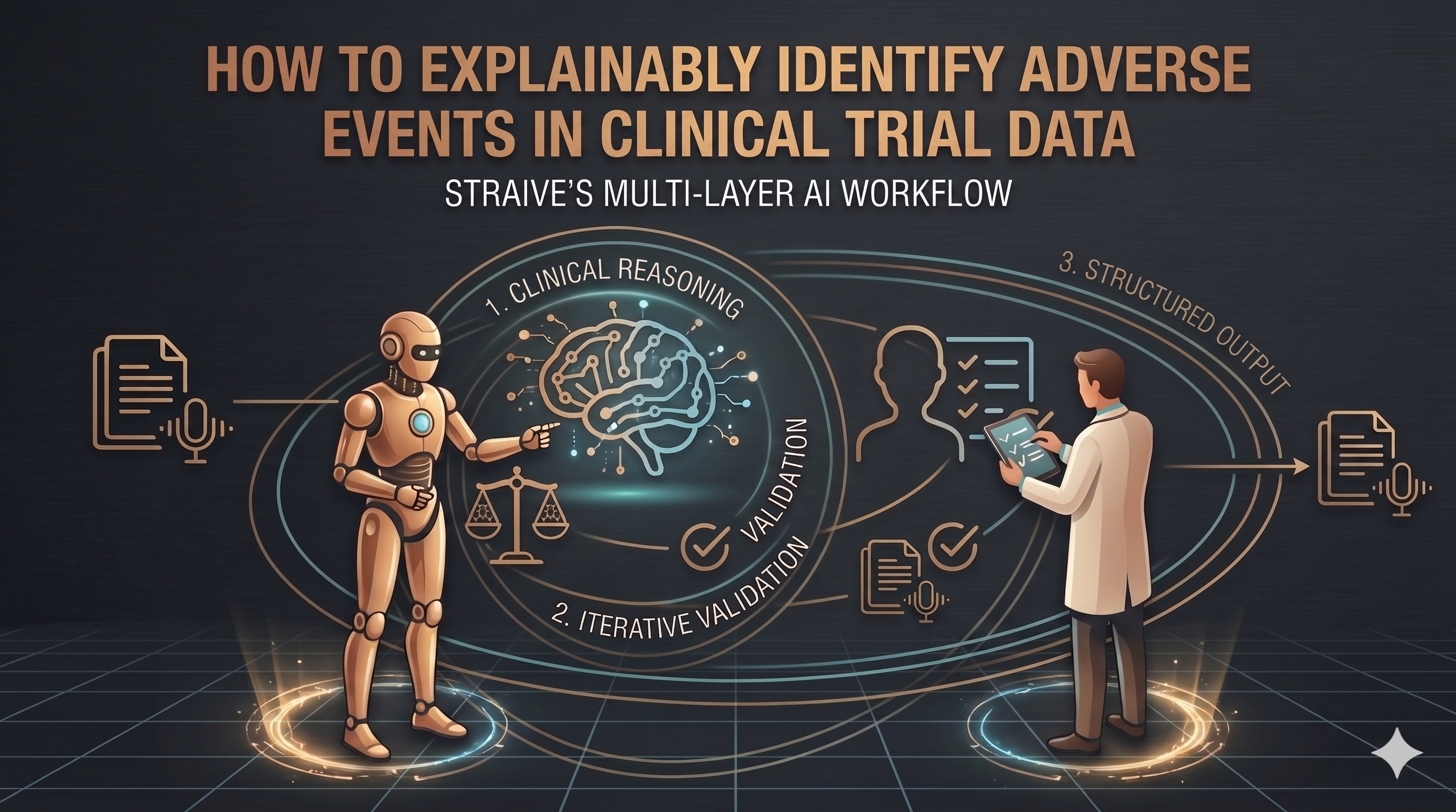

Straive operationalizes this process through multi-layer AI workflows that combine clinical reasoning, iterative validation, and structured outputs, enabling precise, explainable, and scalable adverse event identification.

The Challenge of Adverse Events in Clinical Trials

Clinical trials now capture growing volumes of patient-reported and unstructured data across multiple sources. The World Health Organization estimates that healthcare systems capture only 6 to 10 percent of adverse drug reactions, showing how easily teams miss critical safety signals.

Clinical teams must identify adverse events accurately and on time to protect patient safety and ensure reliable study outcomes. As fragmented data buries safety signals, teams struggle to detect them consistently.

Pharmacovigilance processes operate under strict regulatory scrutiny, where every classification must be traceable and defensible. This pressure forces teams to maintain high levels of accuracy and consistency.

Missed or misclassified adverse events can delay risk detection and create compliance challenges. Even small gaps in interpretation can have a significant downstream impact.

Why Traditional Safety Review Methods Fall Short

Traditional safety review methods struggle to keep pace with the growing volume and complexity of clinical trial data. As reliance on manual processes and fragmented systems continues, limitations in speed, consistency, and transparency become increasingly evident.

Heavy Reliance on Manual Review

Reviewing free-text clinical narratives requires significant time and effort, slowing down the overall safety evaluation process. As data volumes increase, this dependency places growing pressure on already stretched clinical and safety teams.

Inconsistent Interpretation

Individual judgment often drives adverse event identification, creating differences in how reviewers interpret signals. This variability across reviewers and studies can result in inconsistent classifications and overlooked risks.

Limited Transparency in AI Models

Many AI systems lack clear reasoning behind their outputs, making their decisions difficult to interpret. This lack of transparency creates challenges when teams need to justify classifications during audits and regulatory reviews.

The Need for Explainable, Multi-Layered AI in Pharmacovigilance

As data grows, you cannot rely on automation alone. Organizations need AI that detects, explains, and withstands regulatory scrutiny. Here are the key reasons driving this need.

Balancing Automation with Clinical Reasoning

AI scans large volumes of clinical narratives and quickly flags potential adverse events. When combined with domain-trained models and expert validation, this screening helps ensure that signals are interpreted within the right clinical context.

Ensuring Transparency and Traceability

Every classification must be backed by clear reasoning and source data. Explainable AI provides traceable links and decision logic, helping teams review outputs and confidently defend them during audits.

Generating Structured, Regulator-Ready Outputs

AI systems convert unstructured signals into standardized, well-classified outputs. This reduces manual effort, speeds up reporting, and aligns outputs with regulatory formats from the start.

Scaling Across Studies Without Losing Consistency

As trial volumes increase, maintaining consistency becomes harder with manual review. Multi-layered AI applies uniform logic across datasets, reducing variability and improving reliability across studies.

Enabling Continuous Learning and Improvement

AI systems improve as they learn from new data and expert feedback. This allows models to adapt to evolving clinical patterns and regulatory expectations without disrupting existing workflows.

A Multi-Agent AI Workflow for Explainable Adverse Event Identification

The need for explainable, multi-layered AI becomes real at the workflow level. Straive operationalizes this through a coordinated multi-agent system in which each layer validates, refines, and strengthens the outcome.

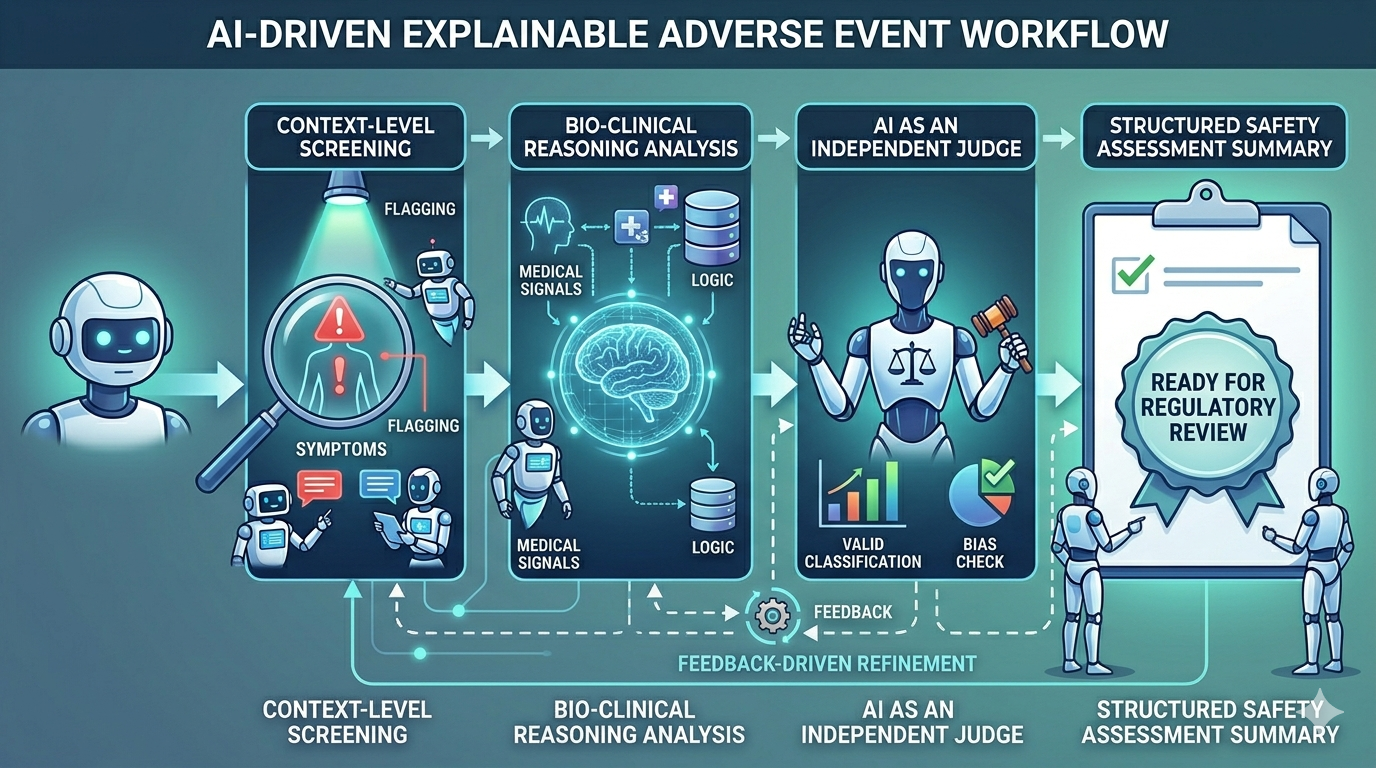

Context-Level Screening

The workflow begins by scanning clinical narratives to detect potential safety signals. It flags relevant language, symptoms, and contextual cues that may indicate an adverse event.
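
To make the screening step concrete, here is a minimal sketch of what a first-pass screen might look like. It is an illustration only, not Straive's implementation: the cue list, the `Flag` structure, and the `screen_narrative` function are all hypothetical, and a simple keyword match stands in for the model-based screening described above.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical cue list for illustration only. A production screen would
# rely on a trained model or a coded medical dictionary, not five keywords.
SYMPTOM_CUES = ("pain", "nausea", "rash", "dizziness", "headache")


@dataclass
class Flag:
    sentence_index: int   # position of the sentence within the note
    sentence: str         # the flagged sentence, kept for traceability
    cues: List[str]       # which cues triggered the flag


def screen_narrative(text: str) -> List[Flag]:
    """Split a note into sentences and flag those containing a symptom cue."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    flags = []
    for i, sentence in enumerate(sentences):
        lowered = sentence.lower()
        cues = [c for c in SYMPTOM_CUES
                if re.search(rf"\b{re.escape(c)}\b", lowered)]
        if cues:
            flags.append(Flag(i, sentence, cues))
    return flags


note = ("Patient attended the week 2 visit. Reports mild joint pain a few days "
        "after treatment. Vitals within normal limits.")
flags = screen_narrative(note)
for f in flags:
    print(f.sentence_index, f.cues, f.sentence)
```

Keeping the sentence and its position alongside each flag means later layers can always point back to the exact text that raised the signal.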

Bio-Clinical Reasoning Analysis

These flagged signals are then evaluated using deeper clinical logic. The model assesses whether they meet safety event criteria based on medical context and domain knowledge.

AI as an Independent Judge

An independent model then reviews the evaluated outputs. It applies predefined standards to check consistency, reduce bias, and validate classification decisions.

Feedback-Driven Refinement

Based on this evaluation, structured feedback is generated and applied. The system refines earlier outputs iteratively to improve precision and reduce ambiguity.
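
The reasoning, judging, and refinement layers can be pictured as a bounded loop. The sketch below is a simplified illustration under stated assumptions: `reason` and `judge` are hypothetical rule-based stubs standing in for the models, and the "cite timing" feedback message and the `max_rounds` limit are invented for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Assessment:
    text: str
    is_adverse_event: bool
    rationale: str
    accepted: bool = False                       # True once the judge has no feedback
    history: List[str] = field(default_factory=list)  # feedback received so far


def reason(text: str, feedback: Optional[str] = None) -> Assessment:
    """Stub for the clinical reasoning layer (a toy rule, not a model)."""
    lowered = text.lower()
    is_ae = "pain" in lowered
    rationale = "Symptom reported" if is_ae else "No symptom found"
    # Apply the judge's feedback when the note supports it.
    if feedback == "cite timing" and "after treatment" in lowered:
        rationale += "; onset follows treatment"
    return Assessment(text, is_ae, rationale)


def judge(a: Assessment) -> Optional[str]:
    """Stub for the independent judge: returns feedback, or None if accepted."""
    if a.is_adverse_event and "onset" not in a.rationale:
        return "cite timing"
    return None


def assess(text: str, max_rounds: int = 3) -> Assessment:
    """Reason, judge, and refine until accepted or the round limit is hit."""
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")
    feedback = None
    history: List[str] = []
    for _ in range(max_rounds):
        assessment = reason(text, feedback)
        feedback = judge(assessment)
        if feedback is None:
            assessment.accepted = True
            break
        history.append(feedback)
    assessment.history = history
    return assessment


result = assess("Reports mild joint pain a few days after treatment.")
print(result.accepted, result.rationale, result.history)
```

Capping the number of rounds keeps the loop from running indefinitely. In this sketch, anything still unaccepted when the limit is reached comes back with `accepted=False`, which is a natural point to route the case to a human reviewer.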

Structured Safety Assessment Summary

Once validated and refined, the final outputs are consolidated into a standardized format. Each classification includes clear reasoning and is ready for regulatory review and system integration.
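
As a rough illustration of what a standardized, traceable output could look like, the sketch below builds one record per classification and stores the character offsets of the supporting text. The field names, the `build_record` helper, and the sample identifiers are hypothetical; this is not a regulatory submission format or Straive's schema.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class SafetyAssessment:
    subject_id: str
    source_document: str
    evidence_text: str     # the exact text that supports the classification
    evidence_start: int    # character offsets into the source note
    evidence_end: int
    classification: str
    rationale: str


def build_record(subject_id: str, source_document: str, note: str,
                 evidence_text: str, classification: str,
                 rationale: str) -> SafetyAssessment:
    """Create a record whose evidence can be traced back to the source note."""
    start = note.find(evidence_text)
    if start == -1:
        # Refuse to emit a classification that cannot be traced to the source.
        raise ValueError("evidence text not found in source note")
    return SafetyAssessment(subject_id, source_document, evidence_text,
                            start, start + len(evidence_text),
                            classification, rationale)


note = ("Patient attended the week 2 visit. Reports mild joint pain a few days "
        "after treatment. Vitals within normal limits.")
record = build_record(
    subject_id="SUBJ-001",                  # made-up identifier
    source_document="site_note_week2.txt",  # made-up file name
    note=note,
    evidence_text="mild joint pain a few days after treatment",
    classification="potential_adverse_event",
    rationale="Symptom reported; onset follows treatment",
)
print(json.dumps(asdict(record), indent=2))
```

Storing offsets rather than only a copy of the text lets a reviewer or auditor jump straight to the passage in the original note.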

Business Impact for Clinical and Safety Teams

Clinical data complexity is outpacing traditional safety workflows. McKinsey highlights that AI-led approaches are becoming critical to managing this complexity, improving efficiency and enabling more reliable decision-making in clinical and safety operations.

Faster Review of Patient Feedback

Automated screening and layered validation accelerate the identification and confirmation of safety signals across large datasets.

Reduced Manual Workload

Repetitive review tasks are minimized through AI-driven processing, allowing clinical teams to focus on higher-value analysis and decision-making.

Improved Classification Accuracy

Multi-layer validation and clinical reasoning reduce variability, leading to more reliable and accurate identification of adverse events.

Transparent, Audit-Ready Documentation

Each classification is supported by clear reasoning and traceable data, simplifying audit readiness and regulatory review.

Stronger Compliance with Pharmacovigilance Standards

Standardized outputs and consistent workflows help ensure alignment with evolving global safety and reporting requirements.

Operationalizing Explainable Safety Intelligence from Clinical Data

In pharmacovigilance workflows, clinical narratives are not isolated inputs but signals that require interpretation, validation, and structure. At Straive, this takes shape through AI-driven systems that convert fragmented data into transparent, decision-ready safety intelligence.

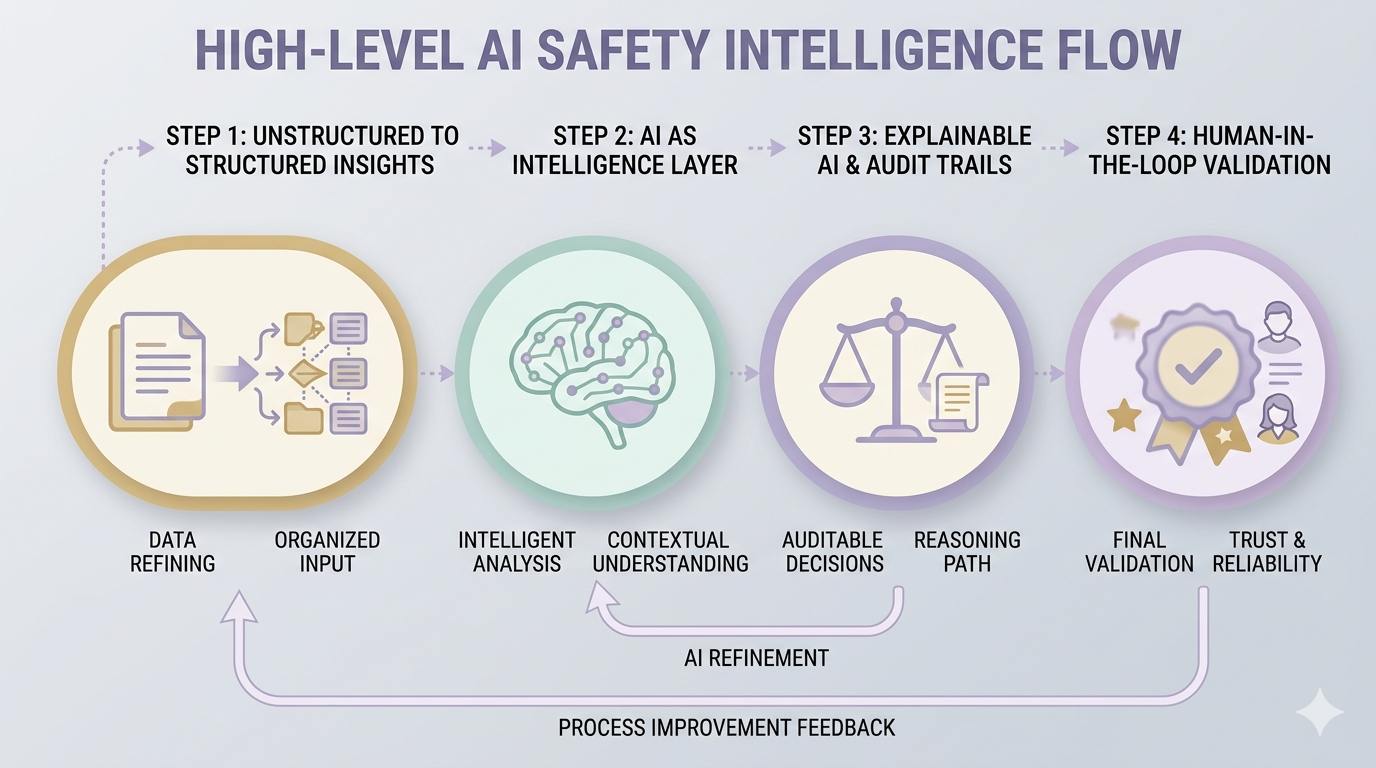

From Unstructured Data to Structured, Usable Insights

Straive transforms clinical narratives into structured, analysis-ready data through AI-powered pipelines. This enables consistent extraction, normalization, and interpretation of safety signals across diverse data sources.

AI as an Intelligence Layer, Not Just Automation

At Straive, AI functions as an intelligence layer, interpreting signals within a clinical context rather than performing surface-level extraction. This supports more informed, consistent, and scalable safety assessments.

Explainable AI with Audit Trails and Compliance

Each classification is supported by transparent reasoning and traceable data points. Straive’s approach embeds explainability and audit readiness into workflows aligned with regulatory expectations.

Human-in-the-Loop Validation and Feedback Loops

Expert validation remains integral within Straive’s workflows, enabling continuous refinement of outputs. Feedback loops strengthen reliability while maintaining the level of trust required in safety monitoring.

See It in Action

Explainable, multi-agent AI overcomes the limitations of fragmented data and manual review. It detects, validates, and structures safety signals with greater speed, consistency, and transparency in regulated environments.

Straive brings together clinical reasoning, layered validation, and audit-ready outputs to enable more confident and defensible safety decisions at scale.

Request a demo to see how Straive can strengthen adverse event identification across your clinical workflows with explainable, AI-driven systems.

Santosh Shevade is a Principal Data Consultant at Gramener – A Straive Company. With deep expertise in healthcare strategy, digital health, and clinical development and operations, he has supported nearly 50 clinical development programs across all clinical phases. His experience spans advanced analytics solution design for pharmaceutical companies, mHealth implementation, and AI applications in healthcare. Previously at Novartis and Johnson & Johnson, Santosh led global clinical development teams and streamlined data review processes for major regulatory submissions. A certified MBTI trainer and leadership coach, he serves as visiting faculty at ISB Hyderabad and Welingkar Institute, focusing on healthcare technology innovation and biopharma strategy.